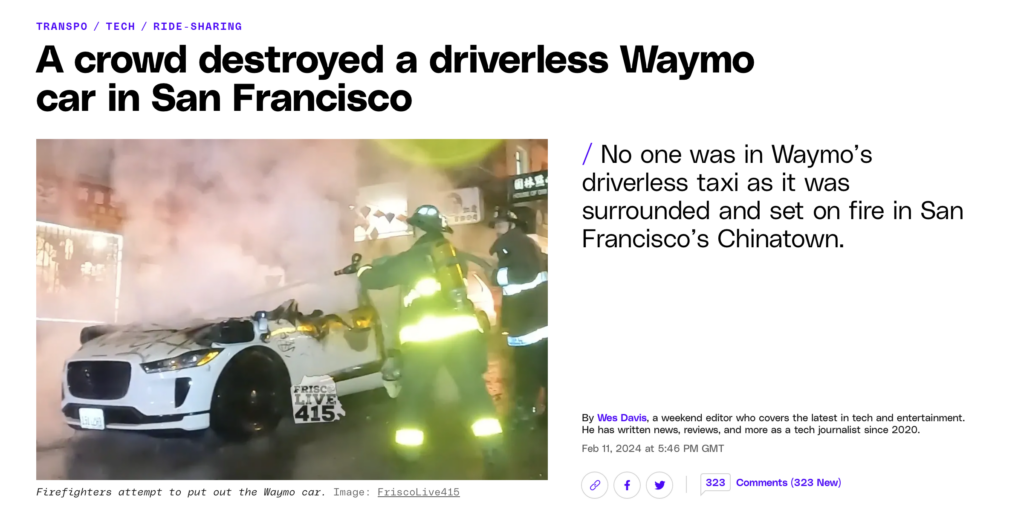

Just a typical Friday night in San-Fran. A Waymo driverless taxi stops in the street. A guy jumps on the bonnet and smashes the windscreen. A crowd forms, covers the car in spray paint, breaks its windows, and sets it on fire.

I’ve been thinking a lot about cars as I’ve written God-like, my forthcoming book on AI. It may seem like an odd connection, but I’m more and more convinced that the situation we’ve found ourselves in trying to handle the technology of cars – and the enormous sociocultural influence that this very particular tool has – has a lot to teach us about how AI is going to impact us.

I mention in the book that, after an event in the UK parliament a few months ago, I got chatting to a post-doctoral researcher who’d done his PhD on driverless cars. He was clear: they are the next frontier in concerns about data privacy. Many new cars measure how tightly you are gripping the steering wheel, track where you are looking, know if you have a baby-seat installed. All new cars know exactly where you are, and what speed you are going.

But what do they do with that information? What they don’t do is share it with the police, or other authorities. This is for very good privacy reasons, but it has created the slightly absurd situation where you can drive at 50 mph down a residential street where children are playing, and you know it, your phone operator knows it, your car knows it and Google / Waze / Garmin knows it… but – unless the police happen to set up a speed trap – nothing is going to get done.

Cars are incredible machines that have amplified our ability to overcome geographic limitations. But they have also become a curse. The freedoms that they have afforded so many have come at a quite staggering cost. The brutal infrastructure of roads. The noise. The fumes that are causing thousands upon thousands of early deaths each year in my home city of London alone. The road rage. The traffic jams. The speeding along rat-runs. The fact that – as Jane Jacobs has expertly argued in The Death and Life of Great American Cities – cars take walking-pace people off the streets, and thus allow social unrest to rise unchecked as more people vacate public space.

As I write in the book:

[Cars] are over-engineered technologies that offer a far greater amplification than we could ever realistically need… but they have reached so far inside us that they are no longer utility vehicles at all. They are symbolic expressions of power, often most for those whose actual power has been oppressed for so long.

In short, cars have become highly personal technologies that we depend on socially and emotionally, and that have eroded the social capital of our communities.

It is very possible that, unregulated, AI tools will become similarly symbolic expressions of power. Over-engineered far beyond their core utility, they will allow users to ‘move fast and break things.’

Because regulation of cars has been so poor, brute-force measures have had to be put in place. A speed bump is a physical interruption, a desperate intervention because people simply refuse to obey speed limits.

With AI, if we get regulation wrong, we will be putting incredibly powerful machines into people’s hands. The benefits will be huge – releasing people from all kinds of limitations – but the costs to our communities could be equally large.

My worry, based on the experience of car culture, is that we risk bowing to the forces of tech power… and that this could – like the speed bumps and other crude interventions – mean that things get ugly as we try to exert control in other ways.

This won’t be the last AI-fuelled taxi to get burned.

Pre-order God-like here.